How Platform Engineering Enables Scalable Data Systems

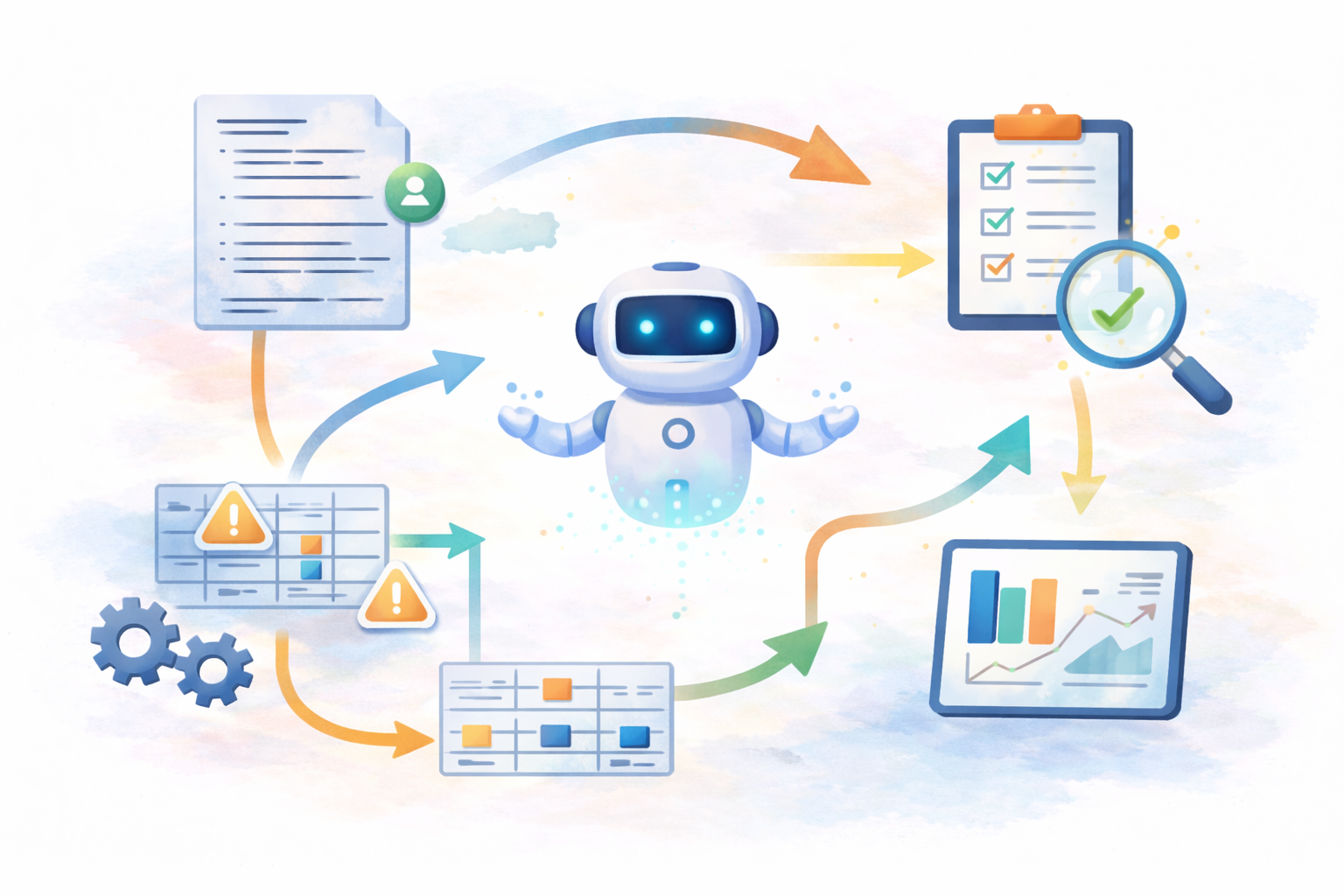

Image created by the author

The Limits of Traditional DevOps

Platform engineering emerged as organizations reached the limits of traditional DevOps models. Traditional DevOps encouraged teams to own infrastructure and delivery end-to-end, and this autonomy worked well at a small scale. However, the effort of maintaining software began to outpace building it as organizations scaled and cloud-native systems grew more complex. Maintaining infrastructure overtook product progress over time, increasing overhead and reducing consistency, security, and reliability.

Platform engineering addresses this by introducing shared internal systems that standardize how work is built and operated. Platform teams provide supported building blocks that teams can rely on rather than asking each team to assemble and maintain its own stack.

What a Platform Actually Is

A platform is a shared internal system treated as a product that standardizes how software is built and operated; many organizations refer to this as an internal developer platform (IDP). It provides self-service building blocks such as infrastructure definitions, pipeline scaffolding, and deployment workflows, expressed as versioned configuration; this way teams don’t need to recreate them or wait on manual provisioning. The platform abstracts operational complexity and reduces cognitive load for developers by packaging infrastructure as a product.

💡 Think of a platform team as a startup building an IDP for an internal market.

Who the Platform Serves

The primary customers of a platform team are the developers who build and operate software inside the organization. The platform exists to make their work safer, faster, and more predictable. In this sense, the platform team functions as a product team serving internal users.

There are also secondary customers: operations, security, and compliance functions that depend on the platform to enforce standards consistently. Leadership relies on it to improve reliability and reduce organizational risks. These benefits are achieved indirectly through a better developer experience.

The main point is that platform engineering is not DevOps re-branded. It is a fundamental shift in how we think about infrastructure and developer productivity.

Traditional ops thinking is:

- How do we keep systems running?

- How do we respond to requests?

- How do we manage infrastructure?

Platform engineering thinking is:

- How do we treat infrastructure as a product?

- How do we enable developer self-service?

- How do we reduce cognitive load for developers?

Applying Platform Engineering to Data Systems

These principles address similar problems when applied to data systems. Data work often involves high variability in inputs, schemas, and outputs. This makes full reuse difficult. While data may vary, the systems that move, process, and operate it often follow the same patterns.

Platform engineering for data focuses on standardizing how pipelines, infrastructure, and operational concerns are handled. This includes how pipelines are created, how infrastructure is provisioned, how environments are configured, and how security and monitoring are handled. The result is a consistent foundation that supports diverse data use cases.

Here is a short “before vs after” contrast:

Before platform engineering:

- Each team builds pipelines differently

- Security reviews happen late

- Monitoring is inconsistent

- Environments drift

After platform engineering:

- Teams start from a template

- Guardrails are built in

- Environments are reproducible

- Ops burden is centralized

What This Looks Like in Practice

This can look like defining cloud data warehouse infrastructure using infrastructure-as-code so environments, roles, and permissions are created the same way every time. It may also include providing version-controlled pipeline templates that teams initialize when starting ingestion or transformation work, enforcing access patterns through shared identity and role modules, and applying standardized monitoring and security instrumentation through reusable platform components so teams do not need to implement these concerns individually.

💡 The platform team defines the scaffolding and declarative configuration. Product teams supply the intent and business logic.

Shifting operational and security concerns earlier in the process is a key aspect of this approach. Instead of relying on manual reviews or team-specific implementations, security and compliance requirements are encoded directly into platform defaults. Teams move faster because guardrails are built into the systems they start with.

Why This Model Scales

This model becomes increasingly necessary as data environments continue to grow in size and complexity. It reduces duplication, clarifies ownership of shared concerns, and allows data teams to focus on problems that are specific to their data rather than rebuilding foundational systems.

Data platform engineering enables scalable data systems by separating what must remain flexible from what should be standardized in practice. That distinction is what allows data teams to operate reliably at scale.